Table of Contents

- 14.1 Comparing Transaction and Nontransaction Engines

- 14.2 Other Storage Engines

- 14.3 Setting the Storage Engine

- 14.4 Overview of MySQL Storage Engine Architecture

- 14.5 The MyISAM Storage Engine

- 14.6 The InnoDB Storage Engine

- 14.6.1 Introduction to InnoDB

- 14.6.2 InnoDB Configuration

- 14.6.3 InnoDB Concepts and Architecture

- 14.6.4 InnoDB Tablespace Management

- 14.6.5 InnoDB Table Management

- 14.6.6 InnoDB Disk I/O and File Space Management

- 14.6.7 InnoDB Startup Options and System Variables

- 14.6.8 InnoDB Performance Tuning Tips

- 14.6.9 InnoDB Monitors

- 14.6.10 InnoDB Backup and Recovery

- 14.6.11 InnoDB and MySQL Replication

- 14.6.12 InnoDB Troubleshooting

- 14.7 The IBMDB2I Storage Engine

- 14.8 The MERGE Storage Engine

- 14.9 The MEMORY Storage Engine

- 14.10 The EXAMPLE Storage Engine

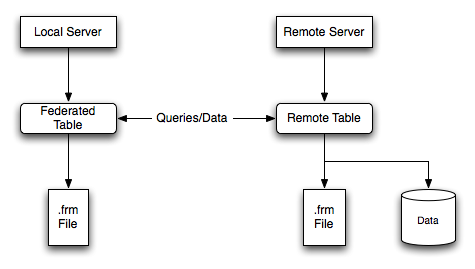

- 14.11 The FEDERATED Storage Engine

- 14.12 The ARCHIVE Storage Engine

- 14.13 The CSV Storage Engine

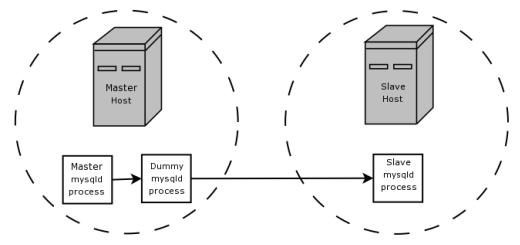

- 14.14 The BLACKHOLE Storage Engine

MySQL supports several storage engines that act as handlers for different table types. MySQL storage engines include both those that handle transaction-safe tables and those that handle nontransaction-safe tables.

As of MySQL 5.1, MySQL Server uses a pluggable storage engine architecture that enables storage engines to be loaded into and unloaded from a running MySQL server.

Prior to MySQL 5.1.38, the pluggable storage engine architecture is supported on Unix platforms only and pluggable storage engines are not supported on Windows.

To determine which storage engines your server supports, use the SHOW ENGINES statement. The value in the Support column indicates whether an engine can be used. A value of YES, NO, or DEFAULT indicates that an engine is available, not available, or available and currently set as the default storage engine, respectively.

mysql> SHOW ENGINES\G

*************************** 1. row ***************************

Engine: FEDERATED

Support: NO

Comment: Federated MySQL storage engine

Transactions: NULL

XA: NULL

Savepoints: NULL

*************************** 2. row ***************************

Engine: MRG_MYISAM

Support: YES

Comment: Collection of identical MyISAM tables

Transactions: NO

XA: NO

Savepoints: NO

*************************** 3. row ***************************

Engine: MyISAM

Support: DEFAULT

Comment: Default engine as of MySQL 3.23 with great performance

Transactions: NO

XA: NO

Savepoints: NO

...
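As a rough illustration of how the Support column can be read programmatically, the following Python sketch parses vertical (backslash-G) client output and lists the usable engines. The function names are hypothetical, and the sample text is an abbreviated copy of the output shown above, not the result of a live query.

```python
# Abbreviated copy of the sample output above (Engine and Support fields only).
SAMPLE = """
*************************** 1. row ***************************
Engine: FEDERATED
Support: NO
*************************** 2. row ***************************
Engine: MRG_MYISAM
Support: YES
*************************** 3. row ***************************
Engine: MyISAM
Support: DEFAULT
"""


def parse_vertical(output):
    """Parse vertical-format client output into a list of dicts, one per row."""
    rows = []
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith("***"):
            rows.append({})  # a separator line starts a new record
        elif ":" in line and rows:
            key, _, value = line.partition(":")
            rows[-1][key.strip()] = value.strip()
    return rows


def usable_engines(rows):
    """Engines whose Support value is YES or DEFAULT."""
    return [r["Engine"] for r in rows if r.get("Support") in ("YES", "DEFAULT")]


def default_engine(rows):
    """The engine marked DEFAULT, or None if no row carries that value."""
    for r in rows:
        if r.get("Support") == "DEFAULT":
            return r["Engine"]
    return None


rows = parse_vertical(SAMPLE)
print(usable_engines(rows))  # ['MRG_MYISAM', 'MyISAM']
print(default_engine(rows))  # MyISAM
```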

This chapter describes each of the MySQL storage engines except for NDBCLUSTER, which is covered in Chapter 17, MySQL Cluster NDB 6.1 - 7.1. It also contains a description of the pluggable storage engine architecture (see Section 14.4, “Overview of MySQL Storage Engine Architecture”).

For information about storage engine support offered in commercial MySQL Server binaries, see MySQL Enterprise Server 5.1, on the MySQL Web site. The storage engines available might depend on which edition of Enterprise Server you are using.

For answers to some commonly asked questions about MySQL storage engines, see Section A.2, “MySQL 5.1 FAQ: Storage Engines”.

MySQL 5.1 supported storage engines

- MyISAM: The default MySQL storage engine and the one that is used the most in Web, data warehousing, and other application environments. MyISAM is supported in all MySQL configurations, and is the default storage engine unless you have configured MySQL to use a different one by default.

- InnoDB: A transaction-safe (ACID compliant) storage engine for MySQL that has commit, rollback, and crash-recovery capabilities to protect user data. InnoDB row-level locking (without escalation to coarser granularity locks) and Oracle-style consistent nonlocking reads increase multi-user concurrency and performance. InnoDB stores user data in clustered indexes to reduce I/O for common queries based on primary keys. To maintain data integrity, InnoDB also supports FOREIGN KEY referential-integrity constraints.

- Memory: Stores all data in RAM for extremely fast access in environments that require quick lookups of reference and other like data. This engine was formerly known as the HEAP engine.

- Merge: Enables a MySQL DBA or developer to logically group a series of identical MyISAM tables and reference them as one object. Good for VLDB environments such as data warehousing.

- Archive: Provides the perfect solution for storing and retrieving large amounts of seldom-referenced historical, archived, or security audit information.

- Federated: Offers the ability to link separate MySQL servers to create one logical database from many physical servers. Very good for distributed or data mart environments.

- NDB (also known as NDBCLUSTER): This clustered database engine is particularly suited for applications that require the highest possible degree of uptime and availability.

  Note: The NDB storage engine is not supported in standard MySQL 5.1 releases. Currently supported MySQL Cluster releases include MySQL Cluster NDB 7.0 and MySQL Cluster NDB 7.1, which are based on MySQL 5.1, and MySQL Cluster NDB 7.2, which is based on MySQL 5.5. While based on MySQL Server, these releases also contain support for NDB.

- CSV: The CSV storage engine stores data in text files using comma-separated values format. You can use the CSV engine to easily exchange data between other software and applications that can import and export in CSV format.

- Blackhole: The Blackhole storage engine accepts but does not store data, and retrievals always return an empty set. The functionality can be used in distributed database design where data is automatically replicated, but not stored locally.

- Example: The Example storage engine is a “stub” engine that does nothing. You can create tables with this engine, but no data can be stored in them or retrieved from them. The purpose of this engine is to serve as an example in the MySQL source code that illustrates how to begin writing new storage engines. As such, it is primarily of interest to developers.

It is important to remember that you are not restricted to using the same storage engine for an entire server or schema: you can use a different storage engine for each table in your schema.

Choosing a Storage Engine

The various storage engines provided with MySQL are designed with different use cases in mind. To use the pluggable storage architecture effectively, it is good to have an idea of the advantages and disadvantages of the various storage engines. The following table provides an overview of some storage engines provided with MySQL:

Table 14.1 Storage Engine Features

| Feature | MyISAM | Memory | InnoDB | Archive | NDB |
|---|---|---|---|---|---|
| Storage limits | 256TB | RAM | 64TB | None | 384EB |
| Transactions | No | No | Yes | No | Yes |
| Locking granularity | Table | Table | Row | Table | Row |
| MVCC | No | No | Yes | No | No |
| Geospatial data type support | Yes | No | Yes | Yes | Yes |
| Geospatial indexing support | Yes | No | Yes[a] | No | No |
| B-tree indexes | Yes | Yes | Yes | No | No |
| T-tree indexes | No | No | No | No | Yes |
| Hash indexes | No | Yes | No[b] | No | Yes |
| Full-text search indexes | Yes | No | Yes[c] | No | No |
| Clustered indexes | No | No | Yes | No | No |
| Data caches | No | N/A | Yes | No | Yes |
| Index caches | Yes | N/A | Yes | No | Yes |
| Compressed data | Yes[d] | No | Yes[e] | Yes | No |
| Encrypted data[f] | Yes | Yes | Yes | Yes | Yes |
| Cluster database support | No | No | No | No | Yes |
| Replication support[g] | Yes | Yes | Yes | Yes | Yes |
| Foreign key support | No | No | Yes | No | No |
| Backup / point-in-time recovery[h] | Yes | Yes | Yes | Yes | Yes |
| Query cache support | Yes | Yes | Yes | Yes | Yes |
| Update statistics for data dictionary | Yes | Yes | Yes | Yes | Yes |

[a] InnoDB support for geospatial indexing is available in MySQL 5.7.5 and higher.

[b] InnoDB utilizes hash indexes internally for its Adaptive Hash Index feature.

[c] InnoDB support for FULLTEXT indexes is available in MySQL 5.6.4 and higher.

[d] Compressed MyISAM tables are supported only when using the compressed row format. Tables using the compressed row format with MyISAM are read only.

[e] Compressed InnoDB tables require the InnoDB Barracuda file format.

[f] Implemented in the server (via encryption functions), rather than in the storage engine.

[g] Implemented in the server, rather than in the storage engine.

[h] Implemented in the server, rather than in the storage engine.

Transaction-safe tables (TSTs) have several advantages over nontransaction-safe tables (NTSTs):

- They are safer. Even if MySQL crashes or you get hardware problems, you can get your data back, either by automatic recovery or from a backup plus the transaction log.

- You can combine many statements and accept them all at the same time with the COMMIT statement (if autocommit is disabled).

- You can execute ROLLBACK to ignore your changes (if autocommit is disabled).

- If an update fails, all of your changes are reverted. (With nontransaction-safe tables, all changes that have taken place are permanent.)

- Transaction-safe storage engines can provide better concurrency for tables that get many updates concurrently with reads.

You can combine transaction-safe and nontransaction-safe tables in the same statements to get the best of both worlds. However, although MySQL supports several transaction-safe storage engines, for best results, you should not mix different storage engines within a transaction with autocommit disabled. For example, if you do this, changes to nontransaction-safe tables still are committed immediately and cannot be rolled back. For information about this and other problems that can occur in transactions that use mixed storage engines, see Section 13.3.1, “START TRANSACTION, COMMIT, and ROLLBACK Syntax”.

Nontransaction-safe tables have several advantages of their own, all of which occur because there is no transaction overhead:

- Much faster

- Lower disk space requirements

- Less memory required to perform updates

Other storage engines may be available from third parties and community members that have used the Custom Storage Engine interface.

Third party engines are not supported by MySQL. For further information, documentation, installation guides, bug reporting or for any help or assistance with these engines, please contact the developer of the engine directly.

For more information on developing a custom storage engine that can be used with the Pluggable Storage Engine Architecture, see MySQL Internals: Writing a Custom Storage Engine.

When you create a new table, you can specify which storage engine to use by adding an ENGINE table option to the CREATE TABLE statement:

CREATE TABLE t (i INT) ENGINE = INNODB;

If you omit the ENGINE option, the default storage engine is used. Normally, this is MyISAM, but you can change it by using the --default-storage-engine server startup option, or by setting the default-storage-engine option in the my.cnf configuration file.

You can set the default storage engine to be used during the current session by setting the storage_engine variable:

SET storage_engine=MYISAM;

When MySQL is installed on Windows using the MySQL Configuration Wizard, the InnoDB or MyISAM storage engine can be selected as the default. See Section 2.3.5.5, “MySQL Server Instance Config Wizard: The Database Usage Dialog”.

To convert a table from one storage engine to another, use an ALTER TABLE statement that indicates the new engine:

ALTER TABLE t ENGINE = MYISAM;

See Section 13.1.17, “CREATE TABLE Syntax”, and Section 13.1.7, “ALTER TABLE Syntax”.

If you try to use a storage engine that is not compiled in or that is compiled in but deactivated, MySQL instead creates a table using the default storage engine. This behavior is convenient when you want to copy tables between MySQL servers that support different storage engines. (For example, in a replication setup, perhaps your master server supports transactional storage engines for increased safety, but the slave servers use only nontransactional storage engines for greater speed.)

This automatic substitution of the default storage engine for unavailable engines can be confusing for new MySQL users. A warning is generated whenever a storage engine is automatically changed. To prevent this from happening if the desired engine is unavailable, enable the NO_ENGINE_SUBSTITUTION SQL mode. In this case, an error occurs instead of a warning and the table is not created or altered if the desired engine is unavailable. See Section 5.1.7, “Server SQL Modes”.
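The substitution rule described above can be modeled in a few lines of Python. This is only a sketch of the decision logic, not server code: resolve_engine, EngineUnavailableError, and the message texts are hypothetical names and wording chosen for illustration.

```python
class EngineUnavailableError(Exception):
    """Hypothetical error standing in for the failure raised under NO_ENGINE_SUBSTITUTION."""


def resolve_engine(requested, available, default, no_engine_substitution=False):
    """Return (engine, warning) for a requested engine name.

    If the requested engine is available, it is used and warning is None.
    Otherwise the default engine is substituted with a warning, unless
    no_engine_substitution is set, in which case an error is raised.
    """
    by_name = {name.upper(): name for name in available}
    key = requested.upper()
    if key in by_name:
        return by_name[key], None
    if no_engine_substitution:
        raise EngineUnavailableError(f"storage engine {requested} is unavailable")
    # Illustrative wording only; the server's actual warning text differs.
    return default, f"engine {requested} unavailable, using {default} instead"


print(resolve_engine("innodb", ["MyISAM", "InnoDB"], "MyISAM"))
print(resolve_engine("FEDERATED", ["MyISAM"], "MyISAM"))
```

With no_engine_substitution=True, the second call raises instead of silently falling back, which mirrors the behavior the SQL mode is meant to enforce.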

For new tables, MySQL always creates an .frm file to hold the table and column definitions. The table's index and data may be stored in one or more other files, depending on the storage engine. The server creates the .frm file above the storage engine level. Individual storage engines create any additional files required for the tables that they manage. If a table name contains special characters, the names for the table files contain encoded versions of those characters as described in Section 9.2.3, “Mapping of Identifiers to File Names”.

A database may contain tables of different types. That is, tables need not all be created with the same storage engine.

The MySQL pluggable storage engine architecture enables a database professional to select a specialized storage engine for a particular application need while being completely shielded from the need to manage any specific application coding requirements. The MySQL server architecture isolates the application programmer and DBA from all of the low-level implementation details at the storage level, providing a consistent and easy application model and API. Thus, although there are different capabilities across different storage engines, the application is shielded from these differences.

The pluggable storage engine architecture provides a standard set of management and support services that are common among all underlying storage engines. The storage engines themselves are the components of the database server that actually perform actions on the underlying data that is maintained at the physical server level.

This efficient and modular architecture provides huge benefits for those wishing to specifically target a particular application need—such as data warehousing, transaction processing, or high availability situations—while enjoying the advantage of utilizing a set of interfaces and services that are independent of any one storage engine.

The application programmer and DBA interact with the MySQL database through Connector APIs and service layers that are above the storage engines. If application changes bring about requirements that demand the underlying storage engine change, or that one or more storage engines be added to support new needs, no significant coding or process changes are required to make things work. The MySQL server architecture shields the application from the underlying complexity of the storage engine by presenting a consistent and easy-to-use API that applies across storage engines.

Plugging in a Storage Engine

Before a storage engine can be used, the storage engine plugin shared library must be loaded into MySQL using the INSTALL PLUGIN statement. For example, if the EXAMPLE engine plugin is named example and the shared library is named ha_example.so, you load it with the following statement:

mysql> INSTALL PLUGIN example SONAME 'ha_example.so';

To install a pluggable storage engine, the plugin shared library must be located in the MySQL server plugin directory, the location of which is given by the plugin_dir system variable, and the user issuing the INSTALL PLUGIN statement must have the INSERT privilege for the mysql.plugin table.

Unplugging a Storage Engine

To unplug a storage engine, use the UNINSTALL PLUGIN statement:

mysql> UNINSTALL PLUGIN example;

If you unplug a storage engine that is needed by existing tables, those tables become inaccessible, but will still be present on disk (where applicable). Ensure that there are no tables using a storage engine before you unplug the storage engine.

A MySQL pluggable storage engine is the component in the MySQL database server that is responsible for performing the actual data I/O operations for a database as well as enabling and enforcing certain feature sets that target a specific application need. A major benefit of using specific storage engines is that you are only delivered the features needed for a particular application, and therefore you have less system overhead in the database, with the end result being more efficient and higher database performance. This is one of the reasons that MySQL has always been known to have such high performance, matching or beating proprietary monolithic databases in industry standard benchmarks.

From a technical perspective, what are some of the unique supporting infrastructure components that are in a storage engine? Some of the key feature differentiations include:

Concurrency: Some applications have more granular lock requirements (such as row-level locks) than others. Choosing the right locking strategy can reduce overhead and therefore improve overall performance. This area also includes support for capabilities such as multi-version concurrency control or “snapshot” read.

Transaction Support: Not every application needs transactions, but for those that do, there are very well defined requirements such as ACID compliance and more.

Referential Integrity: The need to have the server enforce relational database referential integrity through DDL defined foreign keys.

Physical Storage: This involves everything from the overall page size for tables and indexes as well as the format used for storing data to physical disk.

Index Support: Different application scenarios tend to benefit from different index strategies. Each storage engine generally has its own indexing methods, although some (such as B-tree indexes) are common to nearly all engines.

Memory Caches: Different applications respond better to some memory caching strategies than others, so although some memory caches are common to all storage engines (such as those used for user connections or MySQL's high-speed Query Cache), others are uniquely defined only when a particular storage engine is put in play.

Performance Aids: This includes multiple I/O threads for parallel operations, thread concurrency, database checkpointing, bulk insert handling, and more.

Miscellaneous Target Features: This may include support for geospatial operations, security restrictions for certain data manipulation operations, and other similar features.

Each set of pluggable storage engine infrastructure components is designed to offer a selective set of benefits for a particular application. Conversely, avoiding a set of component features helps reduce unnecessary overhead. It stands to reason that understanding a particular application's set of requirements and selecting the proper MySQL storage engine can have a dramatic impact on overall system efficiency and performance.

MyISAM is the default storage engine. It is based on the older (and no longer available) ISAM storage engine but has many useful extensions.

Table 14.2 MyISAM Storage Engine Features

| Feature | Support | Feature | Support | Feature | Support |
|---|---|---|---|---|---|
| Storage limits | 256TB | Transactions | No | Locking granularity | Table |
| MVCC | No | Geospatial data type support | Yes | Geospatial indexing support | Yes |
| B-tree indexes | Yes | T-tree indexes | No | Hash indexes | No |
| Full-text search indexes | Yes | Clustered indexes | No | Data caches | No |
| Index caches | Yes | Compressed data | Yes[a] | Encrypted data[b] | Yes |
| Cluster database support | No | Replication support[c] | Yes | Foreign key support | No |
| Backup / point-in-time recovery[d] | Yes | Query cache support | Yes | Update statistics for data dictionary | Yes |

[a] Compressed MyISAM tables are supported only when using the compressed row format. Tables using the compressed row format with MyISAM are read only.

[b] Implemented in the server (via encryption functions), rather than in the storage engine.

[c] Implemented in the server, rather than in the storage engine.

[d] Implemented in the server, rather than in the storage engine.

Each MyISAM table is stored on disk in three files. The files have names that begin with the table name and have an extension to indicate the file type. An .frm file stores the table format. The data file has an .MYD (MYData) extension. The index file has an .MYI (MYIndex) extension.

To specify explicitly that you want a MyISAM table, indicate that with an ENGINE table option:

CREATE TABLE t (i INT) ENGINE = MYISAM;

Normally, it is unnecessary to use ENGINE to specify the MyISAM storage engine. MyISAM is the default engine unless the default has been changed. To ensure that MyISAM is used in situations where the default might have been changed, include the ENGINE option explicitly.

You can check or repair MyISAM tables with the mysqlcheck client or myisamchk utility. You can also compress MyISAM tables with myisampack to take up much less space. See Section 4.5.3, “mysqlcheck — A Table Maintenance Program”, Section 4.6.3, “myisamchk — MyISAM Table-Maintenance Utility”, and Section 4.6.5, “myisampack — Generate Compressed, Read-Only MyISAM Tables”.

MyISAM tables have the following characteristics:

- All data values are stored with the low byte first. This makes the data machine and operating system independent. The only requirements for binary portability are that the machine uses two's-complement signed integers and IEEE floating-point format. These requirements are widely used among mainstream machines. Binary compatibility might not be applicable to embedded systems, which sometimes have peculiar processors.

  There is no significant speed penalty for storing data low byte first; the bytes in a table row normally are unaligned and it takes little more processing to read an unaligned byte in order than in reverse order. Also, the code in the server that fetches column values is not time critical compared to other code.

- All numeric key values are stored with the high byte first to permit better index compression.

- Large files (up to 63-bit file length) are supported on file systems and operating systems that support large files.

- There is a limit of 2^32 (~4.295E+09) rows in a MyISAM table. If you build MySQL with the --with-big-tables option, the row limitation is increased to (2^32)^2 (1.844E+19) rows. See Section 2.11.4, “MySQL Source-Configuration Options”. Binary distributions for Unix and Linux are built with this option.

- The maximum number of indexes per MyISAM table is 64. This can be changed by recompiling. Beginning with MySQL 5.1.4, you can configure the build by invoking configure with the --with-max-indexes=N option, where N is the maximum number of indexes to permit per MyISAM table. N must be less than or equal to 128. Before MySQL 5.1.4, you must change the source.

- The maximum number of columns per index is 16.

- The maximum key length is 1000 bytes. This can also be changed by changing the source and recompiling. For the case of a key longer than 250 bytes, a larger key block size than the default of 1024 bytes is used.

- When rows are inserted in sorted order (as when you are using an AUTO_INCREMENT column), the index tree is split so that the high node only contains one key. This improves space utilization in the index tree.

- Internal handling of one AUTO_INCREMENT column per table is supported. MyISAM automatically updates this column for INSERT and UPDATE operations. This makes AUTO_INCREMENT columns faster (at least 10%). Values at the top of the sequence are not reused after being deleted. (When an AUTO_INCREMENT column is defined as the last column of a multiple-column index, reuse of values deleted from the top of a sequence does occur.) The AUTO_INCREMENT value can be reset with ALTER TABLE or myisamchk.

- Dynamic-sized rows are much less fragmented when mixing deletes with updates and inserts. This is done by automatically combining adjacent deleted blocks and by extending blocks if the next block is deleted.

- MyISAM supports concurrent inserts: If a table has no free blocks in the middle of the data file, you can INSERT new rows into it at the same time that other threads are reading from the table. A free block can occur as a result of deleting rows or an update of a dynamic length row with more data than its current contents. When all free blocks are used up (filled in), future inserts become concurrent again. See Section 8.7.3, “Concurrent Inserts”.

- You can put the data file and index file in different directories on different physical devices to get more speed with the DATA DIRECTORY and INDEX DIRECTORY table options to CREATE TABLE. See Section 13.1.17, “CREATE TABLE Syntax”.

- NULL values are permitted in indexed columns. This takes 0 to 1 bytes per key.

- Each character column can have a different character set. See Section 10.1, “Character Set Support”.

- There is a flag in the MyISAM index file that indicates whether the table was closed correctly. If mysqld is started with the --myisam-recover option, MyISAM tables are automatically checked when opened, and are repaired if the table wasn't closed properly.

- myisamchk marks tables as checked if you run it with the --update-state option. myisamchk --fast checks only those tables that don't have this mark.

- myisamchk --analyze stores statistics for portions of keys, as well as for entire keys.

- myisampack can pack BLOB and VARCHAR columns.
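For reference, the two row limits quoted in the characteristics above can be worked out exactly. The short Python sketch below only does that arithmetic; the constant names are made up for illustration.

```python
# Row limits for a MyISAM table, as described above.
DEFAULT_ROW_LIMIT = 2 ** 32            # default build
BIG_TABLES_ROW_LIMIT = (2 ** 32) ** 2  # build with --with-big-tables

print(DEFAULT_ROW_LIMIT)
print(BIG_TABLES_ROW_LIMIT)
# Scientific notation, comparable to the approximate figure quoted in the text.
print(f"{DEFAULT_ROW_LIMIT:.3E}")
```

The exact values are 4,294,967,296 and 18,446,744,073,709,551,616 rows; the second is the same as 2^64.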

MyISAM also supports the following features:

Additional Resources

- A forum dedicated to the MyISAM storage engine is available at http://forums.mysql.com/list.php?21.

The following options to mysqld can be used to change the behavior of MyISAM tables. For additional information, see Section 5.1.3, “Server Command Options”.

Table 14.3 MyISAM Option/Variable Reference

| Name | Cmd-Line | Option File | System Var | Status Var | Var Scope | Dynamic |
|---|---|---|---|---|---|---|
| bulk_insert_buffer_size | Yes | Yes | Yes | | Both | Yes |
| concurrent_insert | Yes | Yes | Yes | | Global | Yes |
| delay-key-write | Yes | Yes | | | Global | Yes |
| - Variable: delay_key_write | | | Yes | | Global | Yes |
| have_rtree_keys | | | Yes | | Global | No |
| key_buffer_size | Yes | Yes | Yes | | Global | Yes |
| log-isam | Yes | Yes | | | | |
| myisam-block-size | Yes | Yes | | | | |
| myisam_data_pointer_size | Yes | Yes | Yes | | Global | Yes |
| myisam_max_sort_file_size | Yes | Yes | Yes | | Global | Yes |
| myisam_mmap_size | Yes | Yes | Yes | | Global | No |
| myisam-recover | Yes | Yes | | | | |
| - Variable: myisam_recover_options | | | | | | |
| myisam_recover_options | | | Yes | | Global | No |
| myisam_repair_threads | Yes | Yes | Yes | | Both | Yes |
| myisam_sort_buffer_size | Yes | Yes | Yes | | Both | Yes |
| myisam_stats_method | Yes | Yes | Yes | | Both | Yes |
| myisam_use_mmap | Yes | Yes | Yes | | Global | Yes |
| skip-concurrent-insert | Yes | Yes | | | | |
| - Variable: concurrent_insert | | | | | | |
| tmp_table_size | Yes | Yes | Yes | | Both | Yes |

- --myisam-recover=mode: Set the mode for automatic recovery of crashed MyISAM tables.

- --delay-key-write=ALL: Don't flush key buffers between writes for any MyISAM table.

  Note: If you do this, you should not access MyISAM tables from another program (such as from another MySQL server or with myisamchk) when the tables are in use. Doing so risks index corruption. Using --external-locking does not eliminate this risk.

The following system variables affect the behavior of MyISAM tables. For additional information, see Section 5.1.4, “Server System Variables”.

- bulk_insert_buffer_size: The size of the tree cache used in bulk insert optimization.

  Note: This is a limit per thread.

- myisam_max_sort_file_size: The maximum size of the temporary file that MySQL is permitted to use while re-creating a MyISAM index (during REPAIR TABLE, ALTER TABLE, or LOAD DATA INFILE). If the file size would be larger than this value, the index is created using the key cache instead, which is slower. The value is given in bytes.

- myisam_sort_buffer_size: Set the size of the buffer used when recovering tables.

Automatic recovery is activated if you start mysqld with the --myisam-recover option. In this case, when the server opens a MyISAM table, it checks whether the table is marked as crashed or whether the open count variable for the table is not 0 and you are running the server with external locking disabled. If either of these conditions is true, the following happens:

- The server checks the table for errors.

- If the server finds an error, it tries to do a fast table repair (with sorting and without re-creating the data file).

- If the repair fails because of an error in the data file (for example, a duplicate-key error), the server tries again, this time re-creating the data file.

- If the repair still fails, the server tries once more with the old repair option method (write row by row without sorting). This method should be able to repair any type of error and has low disk space requirements.
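The check-then-escalate sequence above can be pictured as an ordered list of fallbacks. The Python sketch below models only that control flow; needs_check, auto_recover, and the stand-in repair callables are hypothetical and do not correspond to server functions.

```python
def needs_check(marked_crashed, open_count, external_locking_enabled):
    """True if either trigger condition from the text holds when a table is opened."""
    return marked_crashed or (open_count != 0 and not external_locking_enabled)


def auto_recover(table, has_errors, repair_steps):
    """Check a table and, if errors are found, try each repair step in order.

    has_errors(table) returns True when the check finds a problem.
    repair_steps is an ordered list of (name, repair) pairs, where
    repair(table) returns True on success.
    Returns "clean", the name of the step that succeeded, or "unrepaired".
    """
    if not has_errors(table):
        return "clean"
    for name, repair in repair_steps:
        if repair(table):
            return name
    return "unrepaired"


# Stand-in repair callables: here the fast repair fails and the second step succeeds.
steps = [
    ("fast repair (sorting, data file kept)", lambda t: False),
    ("repair re-creating the data file", lambda t: True),
    ("row-by-row repair without sorting", lambda t: True),
]

if needs_check(marked_crashed=True, open_count=0, external_locking_enabled=False):
    print(auto_recover("test.t1", lambda t: True, steps))
```

Keeping the steps in a list makes the ordering explicit: the cheaper repair is always attempted before the slower ones.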

If the recovery wouldn't be able to recover all rows from previously completed statements and you didn't specify FORCE in the value of the --myisam-recover option, automatic repair aborts with an error message in the error log:

Error: Couldn't repair table: test.g00pages

If you specify FORCE, a warning like this is written instead:

Warning: Found 344 of 354 rows when repairing ./test/g00pages

Note that if the automatic recovery value includes BACKUP, the recovery process creates files with names of the form tbl_name-datetime.BAK.

MyISAM tables use B-tree indexes. You can roughly calculate the size for the index file as (key_length+4)/0.67, summed over all keys. This is for the worst case when all keys are inserted in sorted order and the table doesn't have any compressed keys.
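The rule of thumb above is easy to turn into a small calculator. The following Python sketch assumes that "summed over all keys" means one key entry per row for each index; that reading, the function name, and the constant names are assumptions, and the source does not explain where the constants 4 and 0.67 come from.

```python
import math

PER_KEY_OVERHEAD = 4  # the "+4" in the formula, in bytes
DIVISOR = 0.67        # the "/0.67" in the formula


def estimate_index_bytes(key_lengths, row_count):
    """Worst-case index file size estimate in bytes.

    key_lengths: key length in bytes for each index on the table.
    row_count: number of rows (assumed: one key entry per row per index).
    """
    per_row = sum((length + PER_KEY_OVERHEAD) / DIVISOR for length in key_lengths)
    return math.ceil(per_row * row_count)


# One index on a 4-byte integer column, one million rows.
print(estimate_index_bytes([4], 1_000_000))  # 11940299
```

Because string keys are compressed, as described next, real index files for string columns are often smaller than this figure.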

String indexes are space compressed. If the first index part is a string, it is also prefix compressed. Space compression makes the index file smaller than the worst-case figure if a string column has a lot of trailing space or is a VARCHAR column that is not always used to the full length. Prefix compression is used on keys that start with a string. Prefix compression helps if there are many strings with an identical prefix.

In MyISAM tables, you can also prefix compress numbers by specifying the PACK_KEYS=1 table option when you create the table. Numbers are stored with the high byte first, so this helps when you have many integer keys that have an identical prefix.

MyISAM supports three different storage formats. Two of them, fixed and dynamic format, are chosen automatically depending on the type of columns you are using. The third, compressed format, can be created only with the myisampack utility (see Section 4.6.5, “myisampack — Generate Compressed, Read-Only MyISAM Tables”).

When you use CREATE TABLE or ALTER TABLE for a table that has no BLOB or TEXT columns, you can force the table format to FIXED or DYNAMIC with the ROW_FORMAT table option.

See Section 13.1.17, “CREATE TABLE Syntax”, for information about ROW_FORMAT.

You can decompress (unpack) compressed MyISAM tables using myisamchk --unpack; see Section 4.6.3, “myisamchk — MyISAM Table-Maintenance Utility”, for more information.

Static format is the default for MyISAM tables. It is used when the table contains no variable-length columns (VARCHAR, VARBINARY, BLOB, or TEXT). Each row is stored using a fixed number of bytes.

Of the three MyISAM storage formats, static

format is the simplest and most secure (least subject to

corruption). It is also the fastest of the on-disk formats due

to the ease with which rows in the data file can be found on

disk: To look up a row based on a row number in the index,

multiply the row number by the row length to calculate the row

position. Also, when scanning a table, it is very easy to read a

constant number of rows with each disk read operation.
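The row lookup described above is a single multiplication. The sketch below is only an illustration: the function name is hypothetical, and it assumes zero-based row numbers and an offset measured from the start of the row data.

```python
def fixed_row_offset(row_number, row_length):
    """Return the byte offset of a fixed-length row (zero-based row numbers assumed)."""
    if row_number < 0 or row_length <= 0:
        raise ValueError("row_number must be >= 0 and row_length must be > 0")
    return row_number * row_length


# Hypothetical table with 21-byte rows: the row numbered 10 starts at byte 210.
offset = fixed_row_offset(10, 21)
print(offset)
```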

The security is evidenced if your computer crashes while the

MySQL server is writing to a fixed-format

MyISAM file. In this case,

myisamchk can easily determine where each row

starts and ends, so it can usually reclaim all rows except the

partially written one. Note that MyISAM table

indexes can always be reconstructed based on the data rows.

Fixed-length row format is only available for tables without

BLOB or

TEXT columns. Creating a table

with these columns with an explicit

ROW_FORMAT clause will not raise an error

or warning; the format specification will be ignored.

Static-format tables have these characteristics:

CHAR and VARCHAR columns are space-padded to the specified column width, although the column type is not altered. BINARY and VARBINARY columns are padded with 0x00 bytes to the column width.

Very quick.

Easy to cache.

Easy to reconstruct after a crash, because rows are located in fixed positions.

Reorganization is unnecessary unless you delete a huge number of rows and want to return free disk space to the operating system. To do this, use OPTIMIZE TABLE or myisamchk -r.

Usually require more disk space than dynamic-format tables.

Dynamic storage format is used if a MyISAM

table contains any variable-length columns

(VARCHAR,

VARBINARY,

BLOB, or

TEXT), or if the table was

created with the ROW_FORMAT=DYNAMIC table

option.

Dynamic format is a little more complex than static format because each row has a header that indicates how long it is. A row can become fragmented (stored in noncontiguous pieces) when it is made longer as a result of an update.

You can use OPTIMIZE TABLE or

myisamchk -r to defragment a table. If you

have fixed-length columns that you access or change frequently

in a table that also contains some variable-length columns, it

might be a good idea to move the variable-length columns to

other tables just to avoid fragmentation.

Dynamic-format tables have these characteristics:

All string columns are dynamic except those with a length less than four.

Each row is preceded by a bitmap that indicates which columns contain the empty string (for string columns) or zero (for numeric columns). Note that this does not include columns that contain NULL values. If a string column has a length of zero after trailing space removal, or a numeric column has a value of zero, it is marked in the bitmap and not saved to disk. Nonempty strings are saved as a length byte plus the string contents.

Much less disk space usually is required than for fixed-length tables.

Each row uses only as much space as is required. However, if a row becomes larger, it is split into as many pieces as are required, resulting in row fragmentation. For example, if you update a row with information that extends the row length, the row becomes fragmented. In this case, you may have to run OPTIMIZE TABLE or myisamchk -r from time to time to improve performance. Use myisamchk -ei to obtain table statistics.

More difficult than static-format tables to reconstruct after a crash, because rows may be fragmented into many pieces and links (fragments) may be missing.

The expected row length for dynamic-sized rows is calculated using the following expression:

3 + (number of columns + 7) / 8 + (number of char columns) + (packed size of numeric columns) + (length of strings) + (number of NULL columns + 7) / 8

There is a penalty of 6 bytes for each link. A dynamic row is linked whenever an update causes an enlargement of the row. Each new link is at least 20 bytes, so the next enlargement probably goes in the same link. If not, another link is created. You can find the number of links using myisamchk -ed. All links may be removed with OPTIMIZE TABLE or myisamchk -r.
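The expected row length and the per-link penalty can be worked through with a short Python sketch. The function and parameter names are hypothetical, the sample values are invented for illustration, and treating the two divisions by 8 as integer divisions is an assumption.

```python
def expected_dynamic_row_length(num_columns, num_char_columns,
                                packed_numeric_size, string_length,
                                num_null_columns, links=0):
    """Expected length in bytes of a dynamic-format row.

    Assumes the "/ 8" terms are integer divisions. Each link adds the
    6-byte penalty mentioned in the text.
    """
    base = (3
            + (num_columns + 7) // 8
            + num_char_columns
            + packed_numeric_size
            + string_length
            + (num_null_columns + 7) // 8)
    return base + 6 * links


# Hypothetical row: 5 columns, 2 of them char columns, 8 bytes of packed
# numeric data, 30 bytes of string data, and 3 NULL columns.
unfragmented = expected_dynamic_row_length(5, 2, 8, 30, 3)
fragmented = expected_dynamic_row_length(5, 2, 8, 30, 3, links=2)
print(unfragmented, fragmented)
```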

Compressed storage format is a read-only format that is generated with the myisampack tool. Compressed tables can be uncompressed with myisamchk.

Compressed tables have the following characteristics:

Compressed tables take very little disk space. This minimizes disk usage, which is helpful when using slow disks (such as CD-ROMs).

Each row is compressed separately, so there is very little access overhead. The header for a row takes up one to three bytes depending on the biggest row in the table. Each column is compressed differently. There is usually a different Huffman tree for each column. Some of the compression types are:

Suffix space compression.

Prefix space compression.

Numbers with a value of zero are stored using one bit.

If values in an integer column have a small range, the column is stored using the smallest possible type. For example, a BIGINT column (eight bytes) can be stored as a TINYINT column (one byte) if all its values are in the range from -128 to 127.

If a column has only a small set of possible values, the data type is converted to ENUM.

A column may use any combination of the preceding compression types.

Can be used for fixed-length or dynamic-length rows.

While a compressed table is read only, and you cannot

therefore update or add rows in the table, DDL (Data

Definition Language) operations are still valid. For example,

you may still use DROP to drop the table,

and TRUNCATE TABLE to empty the

table.

The file format that MySQL uses to store data has been extensively tested, but there are always circumstances that may cause database tables to become corrupted. The following discussion describes how this can happen and how to handle it.

Even though the MyISAM table format is very

reliable (all changes to a table made by an SQL statement are

written before the statement returns), you can still get

corrupted tables if any of the following events occur:

The mysqld process is killed in the middle of a write.

An unexpected computer shutdown occurs (for example, the computer is turned off).

Hardware failures.

You are using an external program (such as myisamchk) to modify a table that is being modified by the server at the same time.

A software bug in the MySQL or MyISAM code.

Typical symptoms of a corrupt table are:

You get the following error while selecting data from the table:

Incorrect key file for table: '...'. Try to repair it

Queries don't find rows in the table or return incomplete results.

You can check the health of a MyISAM table

using the CHECK TABLE statement,

and repair a corrupted MyISAM table with

REPAIR TABLE. When

mysqld is not running, you can also check or

repair a table with the myisamchk command.

See Section 13.7.2.3, “CHECK TABLE Syntax”,

Section 13.7.2.6, “REPAIR TABLE Syntax”, and Section 4.6.3, “myisamchk — MyISAM Table-Maintenance Utility”.

If your tables become corrupted frequently, you should try to

determine why this is happening. The most important thing to

know is whether the table became corrupted as a result of a

server crash. You can verify this easily by looking for a recent

restarted mysqld message in the error log. If

there is such a message, it is likely that table corruption is a

result of the server dying. Otherwise, corruption may have

occurred during normal operation. This is a bug. You should try

to create a reproducible test case that demonstrates the

problem. See Section B.5.4.2, “What to Do If MySQL Keeps Crashing”, and

Section 22.4, “Debugging and Porting MySQL”.

Each MyISAM index file

(.MYI file) has a counter in the header

that can be used to check whether a table has been closed

properly. If you get the following warning from

CHECK TABLE or

myisamchk, it means that this counter has

gone out of sync:

clients are using or haven't closed the table properly

This warning doesn't necessarily mean that the table is corrupted, but you should at least check the table.

The counter works as follows:

The first time a table is updated in MySQL, a counter in the header of the index files is incremented.

The counter is not changed during further updates.

When the last instance of a table is closed (because a FLUSH TABLES operation was performed or because there is no room in the table cache), the counter is decremented if the table has been updated at any point.

When you repair the table or check the table and it is found to be okay, the counter is reset to zero.

To avoid problems with interaction with other processes that might check the table, the counter is not decremented on close if it was zero.
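The counter rules above can be modeled as a small state machine. The following Python class is a toy model for illustration only; it is not taken from the MySQL source, and all names in it are hypothetical.

```python
class OpenCounterModel:
    """Toy model of the counter kept in the .MYI header."""

    def __init__(self):
        self.counter = 0       # value stored in the index file header
        self.updated = False   # in-memory flag: updated since it was opened?

    def update(self):
        # Only the first update increments the counter.
        if not self.updated:
            self.counter += 1
            self.updated = True

    def close_last_instance(self):
        # Decrement only if the table was updated and the counter is not zero.
        if self.updated and self.counter > 0:
            self.counter -= 1
        self.updated = False

    def check_ok_or_repair(self):
        # A repair, or a check that finds the table okay, resets the counter.
        self.counter = 0

    def crash(self):
        # In-memory state is lost; the header keeps whatever was written.
        self.updated = False


clean = OpenCounterModel()
clean.update()
clean.update()
clean.close_last_instance()   # counter returns to zero

crashed = OpenCounterModel()
crashed.update()
crashed.crash()               # counter stays nonzero until a check or repair
print(clean.counter, crashed.counter)
```

In this model a nonzero counter after a crash corresponds to the warning shown earlier, even though the table data may still be intact.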

In other words, the counter can become incorrect only under these conditions:

A MyISAM table is copied without first issuing LOCK TABLES and FLUSH TABLES.

MySQL has crashed between an update and the final close. (Note that the table may still be okay, because MySQL always issues writes for everything between each statement.)

A table was modified by myisamchk --recover or myisamchk --update-state at the same time that it was in use by mysqld.

Multiple mysqld servers are using the table and one server performed a REPAIR TABLE or CHECK TABLE on the table while it was in use by another server. In this setup, it is safe to use CHECK TABLE, although you might get the warning from other servers. However, REPAIR TABLE should be avoided because when one server replaces the data file with a new one, this is not known to the other servers.

In general, it is a bad idea to share a data directory among multiple servers. See Section 5.3, “Running Multiple MySQL Instances on One Machine”, for additional discussion.

Key Advantages of InnoDB

InnoDB is a high-reliability and high-performance

storage engine for MySQL. Key advantages of

InnoDB include:

Its design follows the ACID model, with transactions featuring commit, rollback, and crash-recovery capabilities to protect user data.

Row-level locking (without escalation to coarser granularity locks) and Oracle-style consistent reads increase multi-user concurrency and performance.

InnoDB tables arrange your data on disk to optimize common queries based on primary keys. Each InnoDB table has a primary key index called the clustered index that organizes the data to minimize I/O for primary key lookups.

To maintain data integrity, InnoDB also supports FOREIGN KEY referential-integrity constraints.

You can freely mix InnoDB tables with tables from other MySQL storage engines, even within the same statement. For example, you can use a join operation to combine data from InnoDB and MEMORY tables in a single query.

InnoDB has been designed for CPU efficiency and maximum performance when processing large data volumes.

To determine whether your server supports InnoDB

use the SHOW ENGINES statement. See

Section 13.7.5.17, “SHOW ENGINES Syntax”.

Table 14.4 InnoDB Storage Engine Features

| Feature | Support | Feature | Support | Feature | Support |
|---|---|---|---|---|---|
| Storage limits | 64TB | Transactions | Yes | Locking granularity | Row |
| MVCC | Yes | Geospatial data type support | Yes | Geospatial indexing support | Yes[a] |
| B-tree indexes | Yes | T-tree indexes | No | Hash indexes | No[b] |
| Full-text search indexes | Yes[c] | Clustered indexes | Yes | Data caches | Yes |
| Index caches | Yes | Compressed data | Yes[d] | Encrypted data[e] | Yes |
| Cluster database support | No | Replication support[f] | Yes | Foreign key support | Yes |
| Backup / point-in-time recovery[g] | Yes | Query cache support | Yes | Update statistics for data dictionary | Yes |

[a] InnoDB support for geospatial indexing is available in MySQL 5.7.5 and higher.

[b] InnoDB utilizes hash indexes internally for its Adaptive Hash Index feature.

[c] InnoDB support for FULLTEXT indexes is available in MySQL 5.6.4 and higher.

[d] Compressed InnoDB tables require the InnoDB Barracuda file format.

[e] Implemented in the server (via encryption functions), rather than in the storage engine.

[f] Implemented in the server, rather than in the storage engine.

[g] Implemented in the server, rather than in the storage engine.

The InnoDB storage engine maintains its own

buffer pool for caching data and indexes in main memory.

InnoDB stores its tables and indexes in a

tablespace, which may consist of several files (or raw disk

partitions). This is different from, for example,

MyISAM tables where each table is stored using

separate files. InnoDB tables can be very large

even on operating systems where file size is limited to 2GB.

The Windows Essentials installer makes InnoDB the

MySQL default storage engine on Windows, if the server being

installed supports InnoDB.

To compare the features of InnoDB with other

storage engines provided with MySQL, see the Storage

Engine Features table in

Chapter 14, Storage Engines.

The InnoDB Plugin for MySQL

At the 2008 MySQL User Conference, Innobase announced availability of an InnoDB Plugin for MySQL. This plugin for MySQL exploits the “pluggable storage engine” architecture of MySQL, to permit users to replace the “built-in” version of InnoDB in MySQL 5.1.

As of MySQL 5.1.38, the InnoDB Plugin is included in MySQL 5.1 releases, in addition to the built-in version of InnoDB that has been included in previous releases. MySQL 5.1.42 through 5.1.45 include InnoDB Plugin 1.0.6, which is considered of Release Candidate (RC) quality. MySQL 5.1.46 and up include InnoDB Plugin 1.0.7 or higher, which is considered of General Availability (GA) quality.

Prior to MySQL Cluster NDB 7.1.11, MySQL Cluster was not compatible with the InnoDB Plugin.

The InnoDB Plugin offers new features, improved performance and scalability, enhanced reliability and new capabilities for flexibility and ease of use. Among the features of the InnoDB Plugin are “Fast index creation,” table and index compression, file format management, new INFORMATION_SCHEMA tables, capacity tuning, multiple background I/O threads, and group commit.

The InnoDB Plugin is included in source and binary distributions, except RHEL3, RHEL4, SuSE 9 (x86, x86_64, ia64), and generic Linux RPM packages.

For instructions on replacing the built-in version of InnoDB with InnoDB Plugin, see Section 14.6.2.1, “Using InnoDB Plugin Instead of the Built-In InnoDB”.

MySQL Enterprise Backup and InnoDB

The MySQL Enterprise Backup product lets you back up a running MySQL

database, including InnoDB and

MyISAM tables, with minimal disruption to

operations while producing a consistent snapshot of the database.

When MySQL Enterprise Backup is copying InnoDB

tables, reads and writes to both InnoDB and

MyISAM tables can continue. During the copying of

MyISAM and other non-InnoDB tables, reads (but

not writes) to those tables are permitted. In addition, MySQL

Enterprise Backup can create compressed backup files, and back up

subsets of InnoDB tables. In conjunction with the

MySQL binary log, you can perform point-in-time recovery. MySQL

Enterprise Backup is included as part of the MySQL Enterprise

subscription.

For a more complete description of MySQL Enterprise Backup, see Section 23.2, “MySQL Enterprise Backup”.

Additional Resources

For InnoDB-related terms and definitions, see MySQL Glossary.

A forum dedicated to the InnoDB storage engine is available here: MySQL Forums::InnoDB.

InnoDB is published under the same GNU GPL License Version 2 (of June 1991) as MySQL. For more information on MySQL licensing, see http://www.mysql.com/company/legal/licensing/.

The first decisions to make about InnoDB configuration involve how to lay out InnoDB data files, and how much memory to allocate for the InnoDB storage engine. You record these choices either in a configuration file that MySQL reads at startup, or as command-line options in a startup script. The full list of options, descriptions, and allowed parameter values is at Section 14.6.7, “InnoDB Startup Options and System Variables”.

Overview of InnoDB Tablespace and Log Files

Two important disk-based resources managed by the

InnoDB storage engine are its tablespace data

files and its log files. If you specify no InnoDB

configuration options, MySQL creates an auto-extending data file,

slightly larger than 10MB, named ibdata1 and

two log files named ib_logfile0 and

ib_logfile1 in the MySQL data directory. Their

size is given by the size of the

innodb_log_file_size system

variable. To get good performance, explicitly provide

InnoDB parameters as discussed in the following

examples. Naturally, edit the settings to suit your hardware and

requirements.

The examples shown here are representative. See

Section 14.6.7, “InnoDB Startup Options and System Variables” for additional information about

InnoDB-related configuration parameters.

Considerations for Storage Devices

In some cases, database performance improves if the data is not all

placed on the same physical disk. Putting log files on a different

disk from data is very often beneficial for performance. The sample

my.cnf file for large systems later in this section illustrates how to do this. It places the two data files on

different disks and places the log files on a third disk.

InnoDB fills the tablespace beginning with the

first data file. You can also use raw disk partitions (raw devices)

as InnoDB data files, which may speed up I/O. See

Section 14.6.4.4, “Using Raw Disk Partitions for the Shared Tablespace”.

InnoDB is a transaction-safe (ACID compliant)

storage engine for MySQL that has commit, rollback, and

crash-recovery capabilities to protect user data.

However, it cannot do so if the

underlying operating system or hardware does not work as

advertised. Many operating systems or disk subsystems may delay or

reorder write operations to improve performance. On some operating

systems, the very fsync() system call that

should wait until all unwritten data for a file has been flushed

might actually return before the data has been flushed to stable

storage. Because of this, an operating system crash or a power

outage may destroy recently committed data, or in the worst case,

even corrupt the database because of write operations having been

reordered. If data integrity is important to you, perform some

“pull-the-plug” tests before using anything in

production. On Mac OS X 10.3 and up, InnoDB

uses a special fcntl() file flush method. Under

Linux, it is advisable to disable the

write-back cache.

On ATA/SATA disk drives, a command such as hdparm -W0

/dev/hda may work to disable the write-back cache.

Beware that some drives or disk controllers

may be unable to disable the write-back cache.

With regard to InnoDB recovery capabilities

that protect user data, InnoDB uses a file

flush technique involving a structure called the

doublewrite buffer,

which is enabled by default

(innodb_doublewrite=ON). The

doublewrite buffer adds safety to recovery following a crash or

power outage, and improves performance on most varieties of Unix

by reducing the need for fsync() operations. It

is recommended that the

innodb_doublewrite option remains

enabled if you are concerned with data integrity or possible

failures. For additional information about the doublewrite buffer,

see Section 14.6.6, “InnoDB Disk I/O and File Space Management”.

If reliability is a consideration for your data, do not configure

InnoDB to use data files or log files on NFS

volumes. Potential problems vary according to OS and version of

NFS, and include such issues as lack of protection from

conflicting writes, and limitations on maximum file sizes.

Specifying the Location and Size for InnoDB Tablespace Files

To set up the InnoDB tablespace files, use the

innodb_data_file_path option in the

[mysqld] section of the

my.cnf option file. On Windows, you can use

my.ini instead. The value of

innodb_data_file_path should be a

list of one or more data file specifications. If you name more than

one data file, separate them by semicolon

(“;”) characters:

innodb_data_file_path=datafile_spec1[;datafile_spec2]...

For example, the following setting explicitly creates a tablespace having the same characteristics as the default:

[mysqld]

innodb_data_file_path=ibdata1:10M:autoextend

This setting configures a single 10MB data file named

ibdata1 that is auto-extending. No location for

the file is given, so by default, InnoDB creates

it in the MySQL data directory.

Sizes are specified using K,

M, or G suffix letters to

indicate units of KB, MB, or GB.

A tablespace containing a fixed-size 50MB data file named

ibdata1 and a 50MB auto-extending file named

ibdata2 in the data directory can be configured

like this:

[mysqld]

innodb_data_file_path=ibdata1:50M;ibdata2:50M:autoextend

The full syntax for a data file specification includes the file name, its size, and several optional attributes:

file_name:file_size[:autoextend[:max:max_file_size]]

The autoextend and max

attributes can be used only for the last data file in the

innodb_data_file_path line.
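To make the specification syntax concrete, here is a minimal Python sketch that parses an innodb_data_file_path value and enforces the rule that autoextend may appear only on the last data file. It is not how the server parses the option. All names are hypothetical, the sketch requires a K, M, or G suffix with units of 1024 bytes (an assumption), and it does not handle Windows paths that contain a drive-letter colon.

```python
import re
from typing import List, NamedTuple, Optional

UNIT_BYTES = {"K": 1024, "M": 1024 ** 2, "G": 1024 ** 3}


class DataFileSpec(NamedTuple):
    file_name: str
    size_bytes: int
    autoextend: bool
    max_bytes: Optional[int]


def parse_size(text: str) -> int:
    """Convert a size such as '50M' to bytes (a suffix is required in this sketch)."""
    match = re.fullmatch(r"(\d+)([KMG])", text)
    if match is None:
        raise ValueError(f"bad size: {text!r}")
    return int(match.group(1)) * UNIT_BYTES[match.group(2)]


def parse_data_file_path(value: str) -> List[DataFileSpec]:
    """Parse file_name:file_size[:autoextend[:max:max_file_size]] entries."""
    parts = value.split(";")
    specs = []
    for index, part in enumerate(parts):
        fields = part.split(":")
        if len(fields) == 2:
            autoextend, max_bytes = False, None
        elif len(fields) == 3 and fields[2] == "autoextend":
            autoextend, max_bytes = True, None
        elif len(fields) == 5 and fields[2:4] == ["autoextend", "max"]:
            autoextend, max_bytes = True, parse_size(fields[4])
        else:
            raise ValueError(f"bad data file specification: {part!r}")
        if autoextend and index != len(parts) - 1:
            raise ValueError("autoextend is allowed only for the last data file")
        specs.append(
            DataFileSpec(fields[0], parse_size(fields[1]), autoextend, max_bytes)
        )
    return specs


specs = parse_data_file_path("ibdata1:50M;ibdata2:50M:autoextend")
for spec in specs:
    print(spec)
```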

If you specify the autoextend option for the last

data file, InnoDB extends the data file if it

runs out of free space in the tablespace. The increment is 8MB at a

time by default. To modify the increment, change the

innodb_autoextend_increment system

variable.

If the disk becomes full, you might want to add another data file on another disk. For tablespace reconfiguration instructions, see Section 14.6.4.3, “Changing the Number or Size of InnoDB Log Files and Resizing the InnoDB Tablespace”.

InnoDB is not aware of the file system maximum

file size, so be cautious on file systems where the maximum file

size is a small value such as 2GB. To specify a maximum size for an

auto-extending data file, use the max attribute

following the autoextend attribute. Use the

max attribute only in cases where constraining

disk usage is of critical importance, because exceeding the maximum

size causes a fatal error, possibly including a crash. The following

configuration permits ibdata1 to grow up to a

limit of 500MB:

[mysqld]

innodb_data_file_path=ibdata1:10M:autoextend:max:500M

InnoDB creates tablespace files in the MySQL data

directory by default. To specify a location explicitly, use the

innodb_data_home_dir option. For

example, to use two files named ibdata1 and

ibdata2 but create them in the

/ibdata directory, configure

InnoDB like this:

[mysqld]

innodb_data_home_dir = /ibdata

innodb_data_file_path=ibdata1:50M;ibdata2:50M:autoextend

InnoDB does not create directories, so make

sure that the /ibdata directory exists before

you start the server. This is also true of any log file

directories that you configure. Use the Unix or DOS

mkdir command to create any necessary

directories.

Make sure that the MySQL server has the proper access rights to create files in the data directory. More generally, the server must have access rights in any directory where it needs to create data files or log files.

InnoDB forms the directory path for each data

file by textually concatenating the value of

innodb_data_home_dir to the data

file name, adding a path name separator (slash or backslash) between

values if necessary. If the

innodb_data_home_dir option is not

specified in my.cnf at all, the default value

is the “dot” directory ./, which

means the MySQL data directory. (The MySQL server changes its

current working directory to its data directory when it begins

executing.)

If you specify innodb_data_home_dir

as an empty string, you can specify absolute paths for the data

files listed in the

innodb_data_file_path value. The

following example is equivalent to the preceding one:

[mysqld]

innodb_data_home_dir =

innodb_data_file_path=/ibdata/ibdata1:50M;/ibdata/ibdata2:50M:autoextend
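The path rules described above (textual concatenation, a separator added only if necessary, ./ as the default, and an empty string allowing absolute file names) can be sketched in Python. The function name and its separator parameter are hypothetical, and the sketch only mirrors the description in the text rather than the server's actual code.

```python
def data_file_location(home_dir, file_name, separator="/"):
    """Combine innodb_data_home_dir and a data file name as described in the text.

    home_dir=None stands for "option not specified" and defaults to "./".
    """
    if home_dir is None:
        home_dir = "./"
    if home_dir == "":
        # Empty string: the file name is used as given (typically an absolute path).
        return file_name
    if home_dir.endswith(("/", "\\")):
        return home_dir + file_name
    return home_dir + separator + file_name


print(data_file_location("/ibdata", "ibdata1"))
print(data_file_location("", "/ibdata/ibdata1"))
print(data_file_location(None, "ibdata1"))
```

The first two calls yield the same location, which is why the two configuration examples are equivalent.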

Specifying InnoDB Configuration Options

Sample my.cnf file for

small systems. Suppose that you have a computer with

512MB RAM and one hard disk. The following example shows possible

configuration parameters in my.cnf or

my.ini for InnoDB, including

the autoextend attribute. The example suits most

users, both on Unix and Windows, who do not want to distribute

InnoDB data files and log files onto several

disks. It creates an auto-extending data file

ibdata1 and two InnoDB log

files ib_logfile0 and

ib_logfile1 in the MySQL data directory.

[mysqld]

# You can write your other MySQL server options here

# ...

# Data files must be able to hold your data and indexes.

# Make sure that you have enough free disk space.

innodb_data_file_path = ibdata1:10M:autoextend

#

# Set buffer pool size to 50-80% of your computer's memory

innodb_buffer_pool_size=256M

innodb_additional_mem_pool_size=20M

#

# Set the log file size to about 25% of the buffer pool size

innodb_log_file_size=64M

innodb_log_buffer_size=8M

#

innodb_flush_log_at_trx_commit=1

Note that data files must be less than 2GB in some file systems. The combined size of the log files must be less than 4GB. The combined size of data files must be at least slightly larger than 10MB.

Setting Up the InnoDB System Tablespace

When you create an InnoDB system tablespace for

the first time, it is best that you start the MySQL server from the

command prompt. InnoDB then prints the

information about the database creation to the screen, so you can

see what is happening. For example, on Windows, if

mysqld is located in C:\Program

Files\MySQL\MySQL Server 5.1\bin, you can

start it like this:

C:\> "C:\Program Files\MySQL\MySQL Server 5.1\bin\mysqld" --console

If you do not send server output to the screen, check the server's

error log to see what InnoDB prints during the

startup process.

For an example of what the information displayed by

InnoDB should look like, see

Section 14.6.4.1, “Creating the InnoDB Tablespace”.

Editing the MySQL Configuration File

You can place InnoDB options in the

[mysqld] group of any option file that your

server reads when it starts. The locations for option files are

described in Section 4.2.6, “Using Option Files”.

If you installed MySQL on Windows using the installation and

configuration wizards, the option file will be the

my.ini file located in your MySQL installation

directory. See Section 2.3.5.1, “Starting the MySQL Server Instance Config Wizard”.

If your PC uses a boot loader where the C:

drive is not the boot drive, your only option is to use the

my.ini file in your Windows directory

(typically C:\WINDOWS). You can use the

SET command at the command prompt in a console

window to print the value of WINDIR:

C:\> SET WINDIR

windir=C:\WINDOWS

To make sure that mysqld reads options only from

a specific file, use the

--defaults-file option as the first

option on the command line when starting the server:

mysqld --defaults-file=your_path_to_my_cnf

Sample my.cnf file for

large systems. Suppose that you have a Linux computer

with 2GB RAM and three 60GB hard disks at directory paths

/, /dr2 and

/dr3. The following example shows possible

configuration parameters in my.cnf for

InnoDB.

[mysqld]

# You can write your other MySQL server options here

# ...

innodb_data_home_dir =

#

# Data files must be able to hold your data and indexes

innodb_data_file_path = /db/ibdata1:2000M;/dr2/db/ibdata2:2000M:autoextend

#

# Set buffer pool size to 50-80% of your computer's memory,

# but make sure on Linux x86 total memory usage is < 2GB

innodb_buffer_pool_size=1G

innodb_additional_mem_pool_size=20M

innodb_log_group_home_dir = /dr3/iblogs

#

# Set the log file size to about 25% of the buffer pool size

innodb_log_file_size=250M

innodb_log_buffer_size=8M

#

innodb_flush_log_at_trx_commit=1

innodb_lock_wait_timeout=50

#

# Uncomment the next line if you want to use it

#innodb_thread_concurrency=5

Determining the Maximum Memory Allocation for InnoDB

On 32-bit GNU/Linux x86, be careful not to set memory usage too

high. glibc may permit the process heap to grow

over thread stacks, which crashes your server. It is a risk if the

value of the following expression is close to or exceeds 2GB:

innodb_buffer_pool_size + key_buffer_size + max_connections*(sort_buffer_size+read_buffer_size+binlog_cache_size) + max_connections*2MB

Each thread uses a stack (often 2MB, but only 256KB in MySQL

binaries provided by Oracle Corporation) and in the worst case

also uses sort_buffer_size + read_buffer_size

additional memory.
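The memory expression above is easy to evaluate for a planned configuration. The Python sketch below does so for the large-system buffer pool size of 1GB; the function name is hypothetical, and the key_buffer_size (64MB) and binlog_cache_size (32KB) values are assumptions chosen only for illustration.

```python
KB = 1024
MB = 1024 ** 2
GB = 1024 ** 3


def worst_case_memory(innodb_buffer_pool_size, key_buffer_size, max_connections,
                      sort_buffer_size, read_buffer_size, binlog_cache_size,
                      thread_stack=2 * MB):
    """Evaluate the memory expression from the text, in bytes."""
    per_connection = sort_buffer_size + read_buffer_size + binlog_cache_size
    return (innodb_buffer_pool_size
            + key_buffer_size
            + max_connections * per_connection
            + max_connections * thread_stack)


total = worst_case_memory(
    innodb_buffer_pool_size=1 * GB,
    key_buffer_size=64 * MB,        # assumed value
    max_connections=200,
    sort_buffer_size=1 * MB,
    read_buffer_size=1 * MB,
    binlog_cache_size=32 * KB,      # assumed value
)
print(f"{total / MB:.2f} MB of a 2048 MB limit")
```

With these sample values the total stays below 2GB but is close to it, which is the situation the text warns about.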

Tuning other mysqld server parameters. The following values are typical and suit most users:

[mysqld]

skip-external-locking

max_connections=200

read_buffer_size=1M

sort_buffer_size=1M

#

# Set key_buffer to 5 - 50% of your RAM depending on how much

# you use MyISAM tables, but keep key_buffer_size + InnoDB

# buffer pool size < 80% of your RAM

key_buffer_size=value

On Linux, if the kernel is enabled for large page support,

InnoDB can use large pages to allocate memory for

its buffer pool and additional memory pool. See

Section 8.9.7, “Enabling Large Page Support”.

Enabling the InnoDB Plugin

If you want to use InnoDB Plugin rather than the

built-in version of InnoDB, to get the latest

performance improvements and new features such as table compression

and fast index creation, see

Section 14.6.2.1, “Using InnoDB Plugin Instead of the Built-In InnoDB”.

Turning Off InnoDB

If you do not want to use InnoDB tables, start

the server with the

--innodb=OFF or

--skip-innodb

option to disable the InnoDB storage engine. In

this case, the server will not start if the default storage engine

is set to InnoDB. Use

--default-storage-engine to set the

default to some other engine if necessary.

To use InnoDB Plugin in MySQL 5.1,

you must disable the built-in version of InnoDB

that is also included and instruct the server to use

InnoDB Plugin instead. To accomplish this, use

the following lines in your my.cnf file:

[mysqld]

ignore-builtin-innodb

plugin-load=innodb=ha_innodb_plugin.so

For the plugin-load option,

innodb is the name to associate with the plugin

and ha_innodb_plugin.so is the name of the

shared object library that contains the plugin code. The extension

of .so applies for Unix (and similar)

systems. For HP-UX on HPPA (11.11) or Windows, the extension

should be .sl or .dll,

respectively, rather than .so.

If the server has problems finding the plugin when it starts up,

specify the pathname to the plugin directory. For example, if

plugins are located in the lib/mysql/plugin

directory under the MySQL installation directory and you have

installed MySQL at /usr/local/mysql, use

these lines in your my.cnf file:

[mysqld]

ignore-builtin-innodb

plugin-load=innodb=ha_innodb_plugin.so

plugin_dir=/usr/local/mysql/lib/mysql/plugin

The previous examples show how to activate the storage engine part

of InnoDB Plugin, but the plugin also

implements several InnoDB-related

INFORMATION_SCHEMA tables. (For information

about these tables, see

InnoDB INFORMATION_SCHEMA Tables.) To enable these

tables, include additional

name=library pairs in the value of the plugin-load option:

[mysqld]

ignore-builtin-innodb

plugin-load=innodb=ha_innodb_plugin.so

;innodb_trx=ha_innodb_plugin.so

;innodb_locks=ha_innodb_plugin.so

;innodb_lock_waits=ha_innodb_plugin.so

;innodb_cmp=ha_innodb_plugin.so

;innodb_cmp_reset=ha_innodb_plugin.so

;innodb_cmpmem=ha_innodb_plugin.so

;innodb_cmpmem_reset=ha_innodb_plugin.so

The plugin-load option value as

shown here is formatted on multiple lines for display purposes but

should be written in my.cnf using a single

line without spaces in the option value. On Windows, substitute

.dll for each instance of the

.so extension.

After the server starts, verify that InnoDB

Plugin has been loaded by using the

SHOW PLUGINS statement. For

example, if you have loaded the storage engine and the

INFORMATION_SCHEMA tables, the output should

include lines similar to these:

mysql> SHOW PLUGINS;

+---------------------+--------+--------------------+...

| Name | Status | Type |...

+---------------------+--------+--------------------+...

...

| InnoDB | ACTIVE | STORAGE ENGINE |...

| INNODB_TRX | ACTIVE | INFORMATION SCHEMA |...

| INNODB_LOCKS | ACTIVE | INFORMATION SCHEMA |...

| INNODB_LOCK_WAITS | ACTIVE | INFORMATION SCHEMA |...

| INNODB_CMP | ACTIVE | INFORMATION SCHEMA |...

| INNODB_CMP_RESET | ACTIVE | INFORMATION SCHEMA |...

| INNODB_CMPMEM | ACTIVE | INFORMATION SCHEMA |...

| INNODB_CMPMEM_RESET | ACTIVE | INFORMATION SCHEMA |...

+---------------------+--------+--------------------+...
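
As a complementary check, the same information can be read from the INFORMATION_SCHEMA.PLUGINS table, which makes it easy to filter on the library name. The following is a minimal sketch. It assumes the Unix library name ha_innodb_plugin.so used in the earlier examples; the pattern also matches the .dll and .sl variants.

```sql
-- List only the plugins that were loaded from the InnoDB Plugin library.
-- Built-in plugins have a NULL PLUGIN_LIBRARY and are filtered out.
SELECT PLUGIN_NAME, PLUGIN_STATUS, PLUGIN_TYPE, PLUGIN_LIBRARY
FROM INFORMATION_SCHEMA.PLUGINS
WHERE PLUGIN_LIBRARY LIKE 'ha_innodb_plugin%'
ORDER BY PLUGIN_NAME;
```

If the query returns no rows, the plugin library was not loaded.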

An alternative to using the

plugin-load option at server

startup is to use the INSTALL

PLUGIN statement at runtime. First start the server with

the ignore-builtin-innodb option to

disable the built-in version of InnoDB:

[mysqld] ignore-builtin-innodb

Then issue an INSTALL PLUGIN

statement for each plugin that you want to load:

mysql> INSTALL PLUGIN InnoDB SONAME 'ha_innodb_plugin.so';
mysql> INSTALL PLUGIN INNODB_TRX SONAME 'ha_innodb_plugin.so';
mysql> INSTALL PLUGIN INNODB_LOCKS SONAME 'ha_innodb_plugin.so';
...

INSTALL PLUGIN need be issued only

once for each plugin. Installed plugins will be loaded

automatically on subsequent server restarts.
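
INSTALL PLUGIN records each plugin in the mysql.plugin system table, which the server reads at startup. The following sketch shows one way to review or undo those registrations. The plugin name is taken from the example above, and the statements assume an account with sufficient privileges on the mysql database.

```sql
-- Show the plugins registered for automatic loading at startup.
SELECT name, dl FROM mysql.plugin;

-- Remove a registration that is no longer wanted.
UNINSTALL PLUGIN INNODB_LOCKS;
```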

If you build MySQL from a source distribution, InnoDB

Plugin is one of the storage engines that is built by

default. Build MySQL the way you normally do; for example, by

using the instructions at Section 2.11, “Installing MySQL from Source”.

After the build completes, you should find the plugin shared

object file under the storage/innodb_plugin

directory, and make install should install it

in the plugin directory. Configure MySQL to use InnoDB

Plugin as described earlier for binary distributions.

If you use gcc, InnoDB

Plugin cannot be compiled with gcc

3.x; you must use gcc 4.x instead.

In MySQL 5.5, the InnoDB Plugin is also

included, but it becomes the built-in version of

InnoDB in MySQL Server, replacing the version

previously included as the built-in InnoDB

engine. This means that if you use InnoDB

Plugin in MySQL 5.1 using the instructions just given,

you will need to remove

ignore-builtin-innodb and

plugin-load from your startup

options after an upgrade to MySQL 5.5 or the server will fail to

start.

- 14.6.3.1 The InnoDB Transaction Model and Locking

- 14.6.3.2 InnoDB Lock Modes

- 14.6.3.3 Consistent Nonlocking Reads

- 14.6.3.4 SELECT ... FOR UPDATE and SELECT ... LOCK IN SHARE MODE Locking Reads

- 14.6.3.5 InnoDB Record, Gap, and Next-Key Locks

- 14.6.3.6 Avoiding the Phantom Problem Using Next-Key Locking

- 14.6.3.7 Locks Set by Different SQL Statements in InnoDB

- 14.6.3.8 Implicit Transaction Commit and Rollback

- 14.6.3.9 Deadlock Detection and Rollback

- 14.6.3.10 How to Cope with Deadlocks

- 14.6.3.11 InnoDB Multi-Versioning

- 14.6.3.12 InnoDB Table and Index Structures

To implement a large-scale, busy, or highly reliable database application, to port substantial code from a different database system, or to tune MySQL performance, you must understand the notions of transactions and locking as they relate to the InnoDB storage engine.

In the InnoDB transaction model, the goal is to

combine the best properties of a multi-versioning database with

traditional two-phase locking. InnoDB does

locking on the row level and runs queries as nonlocking consistent

reads by default, in the style of Oracle. The lock information in

InnoDB is stored so space-efficiently that lock

escalation is not needed: Typically, several users are permitted

to lock every row in InnoDB tables, or any

random subset of the rows, without causing

InnoDB memory exhaustion.

In InnoDB, all user activity occurs inside a

transaction. If autocommit mode is enabled, each SQL statement

forms a single transaction on its own. By default, MySQL starts

the session for each new connection with autocommit enabled, so

MySQL does a commit after each SQL statement if that statement did

not return an error. If a statement returns an error, the commit

or rollback behavior depends on the error. See

Section 14.6.12.4, “InnoDB Error Handling”.

A session that has autocommit enabled can perform a

multiple-statement transaction by starting it with an explicit

START

TRANSACTION or

BEGIN statement

and ending it with a COMMIT or

ROLLBACK

statement. See Section 13.3.1, “START TRANSACTION, COMMIT, and ROLLBACK Syntax”.

If autocommit mode is disabled within a session with SET

autocommit = 0, the session always has a transaction

open. A COMMIT or

ROLLBACK

statement ends the current transaction and a new one starts.

A COMMIT means that the changes

made in the current transaction are made permanent and become

visible to other sessions. A

ROLLBACK

statement, on the other hand, cancels all modifications made by

the current transaction. Both

COMMIT and

ROLLBACK release

all InnoDB locks that were set during the

current transaction.
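
The following sketch pulls the preceding rules together. The accounts table and its columns are hypothetical and serve only as an illustration; any InnoDB table should behave the same way.

```sql
-- Hypothetical table used only for this illustration.
CREATE TABLE accounts (id INT PRIMARY KEY, balance INT) ENGINE = InnoDB;
INSERT INTO accounts VALUES (1, 100), (2, 100);  -- autocommit: committed at once

-- Autocommit is enabled by default, so an explicit START TRANSACTION
-- is needed to group several statements into one transaction.
START TRANSACTION;
UPDATE accounts SET balance = balance - 10 WHERE id = 1;
UPDATE accounts SET balance = balance + 10 WHERE id = 2;
COMMIT;    -- both changes become permanent and visible; locks are released

-- With autocommit disabled, the session always has a transaction open.
SET autocommit = 0;
UPDATE accounts SET balance = 0 WHERE id = 1;
ROLLBACK;  -- cancels the update; a new transaction starts

SELECT balance FROM accounts WHERE id = 1;  -- returns 90
```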

In terms of the SQL:1992 transaction isolation levels, the default

InnoDB level is

REPEATABLE READ.

InnoDB offers all four transaction isolation

levels described by the SQL standard:

READ UNCOMMITTED,

READ COMMITTED,

REPEATABLE READ, and

SERIALIZABLE.

A user can change the isolation level for a single session or for

all subsequent connections with the SET

TRANSACTION statement. To set the server's default

isolation level for all connections, use the

--transaction-isolation option on

the command line or in an option file. For detailed information

about isolation levels and level-setting syntax, see

Section 13.3.6, “SET TRANSACTION Syntax”.
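
As a brief illustration of the two scopes, the statements below change the level for the current session and for subsequent connections, and then inspect the result. This is a sketch only. It assumes the tx_isolation system variable name used in this MySQL series, and the GLOBAL form requires the SUPER privilege.

```sql
-- Change the level for the current session only.
SET SESSION TRANSACTION ISOLATION LEVEL READ COMMITTED;

-- Change the level for all subsequent connections.
-- Sessions that are already connected keep their current level.
SET GLOBAL TRANSACTION ISOLATION LEVEL REPEATABLE READ;

-- Inspect the global and session values.
SELECT @@GLOBAL.tx_isolation, @@SESSION.tx_isolation;
```

The equivalent server-wide default in an option file is transaction-isolation = REPEATABLE-READ under the [mysqld] group.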

In row-level locking, InnoDB normally uses

next-key locking. That means that besides index records,

InnoDB can also lock the “gap”

preceding an index record to block insertions by other sessions in

the gap immediately before the index record. A next-key lock

refers to a lock that locks an index record and the gap before it.

A gap lock refers to a lock that locks only the gap before some

index record.

For more information about row-level locking, and the circumstances under which gap locking is disabled, see Section 14.6.3.5, “InnoDB Record, Gap, and Next-Key Locks”.

InnoDB implements standard row-level locking

where there are two types of locks,

shared

(S) locks and

exclusive

(X) locks. For information about

record, gap, and next-key lock types, see

Section 14.6.3.5, “InnoDB Record, Gap, and Next-Key Locks”.

- A shared (S) lock permits a transaction to read a row.

- An exclusive (X) lock permits a transaction to update or delete a row.

If transaction T1 holds a shared

(S) lock on row r,

then requests from some distinct transaction T2

for a lock on row r are handled as follows:

- A request by T2 for an S lock can be granted immediately. As a result, both T1 and T2 hold an S lock on r.

- A request by T2 for an X lock cannot be granted immediately.

If a transaction T1 holds an exclusive

(X) lock on row r, a

request from some distinct transaction T2 for a

lock of either type on r cannot be granted

immediately. Instead, transaction T2 has to

wait for transaction T1 to release its lock on

row r.
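
The rules above can be observed with two client sessions. The following is a sketch under stated assumptions: the table name lock_demo is hypothetical, and the comments indicate which session issues each statement.

```sql
-- Setup (either session). The table name lock_demo is hypothetical.
CREATE TABLE lock_demo (i INT PRIMARY KEY) ENGINE = InnoDB;
INSERT INTO lock_demo VALUES (1);

-- Session T1: take an S lock on the row and keep the transaction open.
START TRANSACTION;
SELECT * FROM lock_demo WHERE i = 1 LOCK IN SHARE MODE;

-- Session T2: a second S lock on the same row is granted immediately.
START TRANSACTION;
SELECT * FROM lock_demo WHERE i = 1 LOCK IN SHARE MODE;
ROLLBACK;  -- release the S lock held by T2

-- Session T2: an X lock request must wait for T1.
START TRANSACTION;
SELECT * FROM lock_demo WHERE i = 1 FOR UPDATE;  -- blocks here

-- Session T1: ending the transaction releases the S lock,
-- after which the SELECT ... FOR UPDATE in T2 returns.
COMMIT;
```

The wait in T2 is bounded by the innodb_lock_wait_timeout setting. If T1 does not release its lock in time, the waiting statement fails with a lock wait timeout error.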

Intention Locks

Additionally, InnoDB supports

multiple granularity locking which permits

coexistence of record locks and locks on entire tables. To make

locking at multiple granularity levels practical, additional types

of locks called intention locks are used.

Intention locks are table locks in InnoDB. The

idea behind intention locks is for a transaction to indicate which

type of lock (shared or exclusive) it will require later for a row

in that table. There are two types of intention locks used in

InnoDB (assume that transaction

T has requested a lock of the indicated type on

table t):

- Intention shared (IS): Transaction T intends to set S locks on individual rows in table t.

- Intention exclusive (IX): Transaction T intends to set X locks on those rows.

For example, SELECT ...

LOCK IN SHARE MODE sets an IS

lock and SELECT ... FOR

UPDATE sets an IX lock.

The intention locking protocol is as follows:

- Before a transaction can acquire an S lock on a row in table t, it must first acquire an IS or stronger lock on t.

- Before a transaction can acquire an X lock on a row, it must first acquire an IX lock on t.

These rules can be conveniently summarized by means of the following lock type compatibility matrix.

| | X | IX | S | IS |
|---|---|---|---|---|
| X | Conflict | Conflict | Conflict | Conflict |
| IX | Conflict | Compatible | Conflict | Compatible |
| S | Conflict | Conflict | Compatible | Compatible |
| IS | Conflict | Compatible | Compatible | Compatible |

A lock is granted to a requesting transaction if it is compatible with existing locks, but not if it conflicts with existing locks. A transaction waits until the conflicting existing lock is released. If a lock request conflicts with an existing lock and cannot be granted because it would cause deadlock, an error occurs.

Thus, intention locks do not block anything except full table

requests (for example, LOCK TABLES ... WRITE).

The main purpose of IX and

IS locks is to show that someone is

locking a row, or going to lock a row in the table.

Deadlock Example

The following example illustrates how an error can occur when a lock request would cause a deadlock. The example involves two clients, A and B.

First, client A creates a table containing one row, and then

begins a transaction. Within the transaction, A obtains an

S lock on the row by selecting it in

share mode:

mysql> CREATE TABLE t (i INT) ENGINE = InnoDB;
Query OK, 0 rows affected (1.07 sec)

mysql> INSERT INTO t (i) VALUES(1);
Query OK, 1 row affected (0.09 sec)

mysql> START TRANSACTION;
Query OK, 0 rows affected (0.00 sec)

mysql> SELECT * FROM t WHERE i = 1 LOCK IN SHARE MODE;
+------+
| i    |
+------+
|    1 |
+------+
1 row in set (0.10 sec)

Next, client B begins a transaction and attempts to delete the row from the table:

mysql> START TRANSACTION;
Query OK, 0 rows affected (0.00 sec)

mysql> DELETE FROM t WHERE i = 1;

The delete operation requires an X

lock. The lock cannot be granted because it is incompatible with

the S lock that client A holds, so the

request goes on the queue of lock requests for the row and client

B blocks.

Finally, client A also attempts to delete the row from the table:

mysql> DELETE FROM t WHERE i = 1;